Enough of defense,

Onto enemy terrain.

Capture all their food!

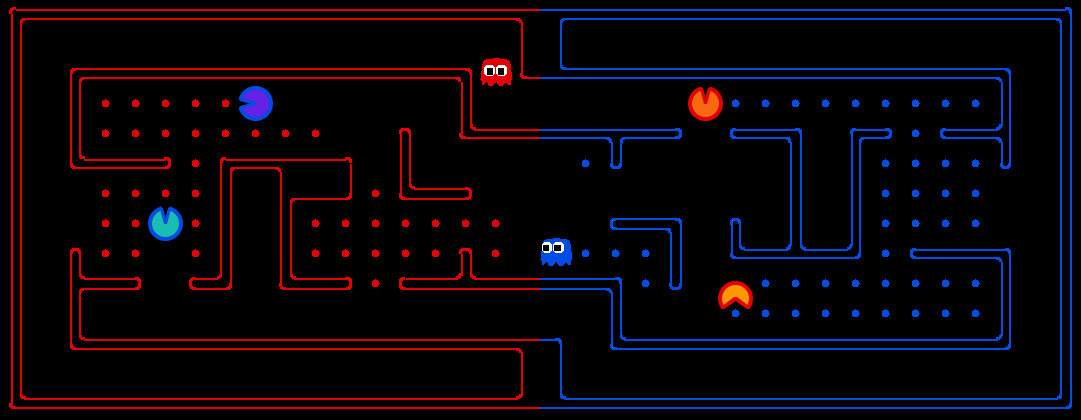

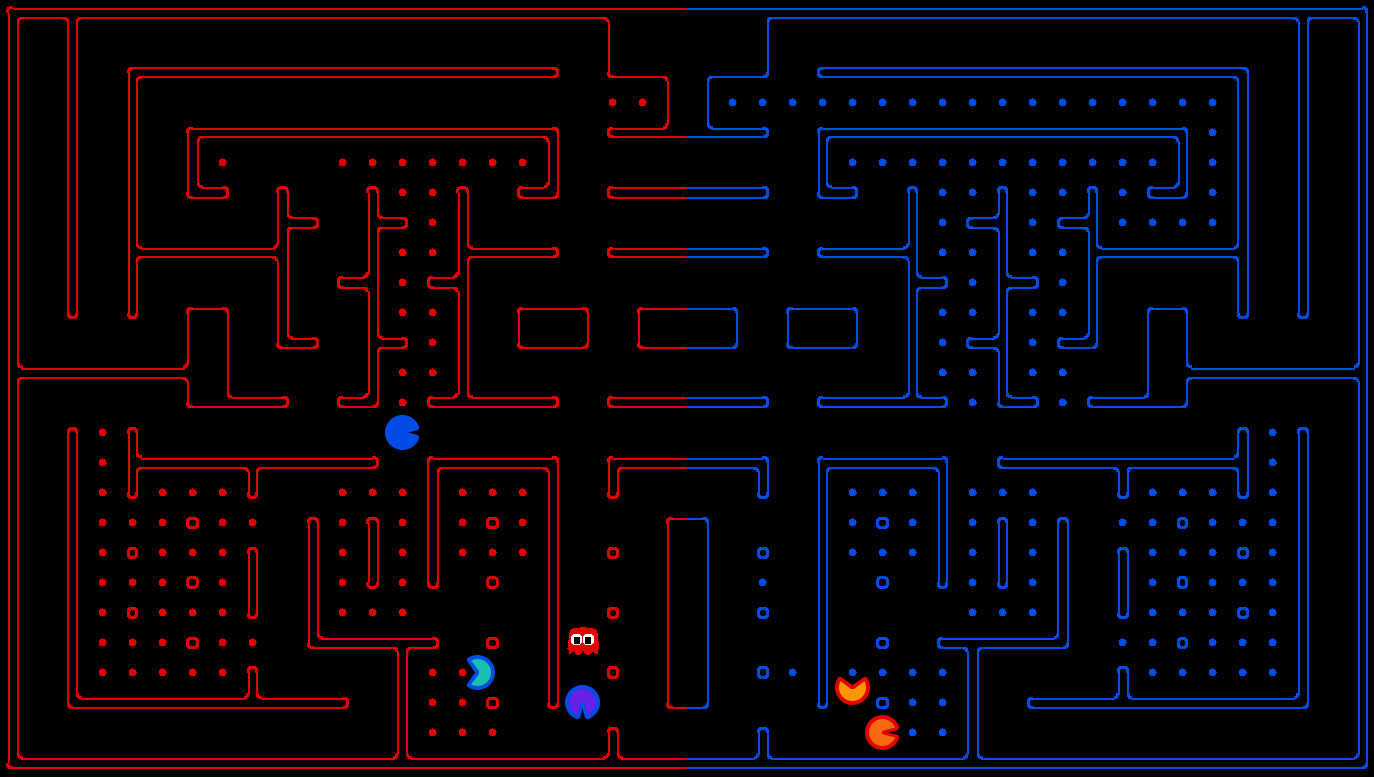

The optional project involves a multi-player capture-the-flag variant of Pac-Man, where agents control both Pac-Man and ghosts in coordinated team-based strategies. Your team will try to eat the food on the far side of the map, while defending the food on your home side. The contest code is available as a zip archive.

You may work with up to four people from the class. This project is completely optional and is worth up to 2.5% extra credit toward the final course grade.

| Key files to read: | |
| --- | --- |
| capture.py | The main file that runs games locally. This file also describes the new capture the flag GameState type and rules. |
| pacclient.py | The main file that runs games over the network. (Note: network play is currently disabled) |
| captureAgents.py | Specification and helper methods for capture agents. |

| Supporting files: | |
| --- | --- |
| game.py | The logic behind how the Pac-Man world works. This file describes several supporting types like AgentState, Agent, Direction, and Grid. |
| util.py | Useful data structures for implementing search algorithms. |
| distanceCalculator.py | Computes shortest paths between all maze positions. |
| graphicsDisplay.py | Graphics for Pac-Man |
| graphicsUtils.py | Support for Pac-Man graphics |
| textDisplay.py | ASCII graphics for Pac-Man |
| keyboardAgents.py | Keyboard interfaces to control Pac-Man |
| layout.py | Code for reading layout files and storing their contents |

Scoring: When a Pac-Man eats a food dot, the food is permanently removed and one point is scored for that Pac-Man's team. Red team scores are positive, while Blue team scores are negative.

Eating Pac-Man: When a Pac-Man is eaten by an opposing ghost, the Pac-Man returns to its starting position (as a ghost). No points are awarded for eating an opponent. Ghosts can never be eaten.

Winning: A game ends when one team eats all but two of the opponents' dots. Games are also limited to 3000 agent moves. If this move limit is reached, whichever team has eaten the most food wins.
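
The scoring and winning rules above can be sketched in a few lines. This is only an illustration of the rules as stated, not the contest's implementation: the function names are hypothetical, and the tie case is an assumption because the rules above do not say what happens when both teams have eaten the same amount.

```python
MIN_FOOD_LEFT = 2   # a team wins by eating all but two of the opponents' dots
MAX_MOVES = 3000    # limit on total agent moves per game


def apply_food_eaten(score, eater_is_red):
    """Return the signed score after one dot is eaten (Red +1, Blue -1)."""
    return score + 1 if eater_is_red else score - 1


def game_over(red_side_food, blue_side_food, moves_made):
    """True once either side is down to two dots or the move limit is hit."""
    return (red_side_food <= MIN_FOOD_LEFT
            or blue_side_food <= MIN_FOOD_LEFT
            or moves_made >= MAX_MOVES)


def leader(score):
    """Name the team that has eaten more food, given the signed score."""
    if score > 0:
        return "Red"
    if score < 0:
        return "Blue"
    return "Tie"  # assumption: the rules above do not define a tie-break
```

Because Red scores are positive and Blue scores are negative, a single signed number is enough to tell which team has eaten more food when the move limit is reached.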

Computation Time: Each agent has 1 second to return each action. Each move which does not return within one second will incur a warning. After three warnings, or any single move taking more than 3 seconds, the game is forfeit. There will be an initial start-up allowance of 15 seconds (use the registerInitialState function).
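
One way to stay inside the one-second limit is to evaluate candidate actions against a deadline and return the best one found so far. The sketch below assumes a hypothetical `evaluate` function supplied by your agent and a hypothetical safety margin of 0.2 seconds; neither is part of the contest code.

```python
import time

MOVE_BUDGET_SECONDS = 1.0  # per-move limit from the rules above
SAFETY_MARGIN = 0.2        # hypothetical cushion, tune for your machine


def choose_within_budget(candidates, evaluate,
                         budget=MOVE_BUDGET_SECONDS - SAFETY_MARGIN):
    """Score candidates until the deadline passes; return the best seen.

    At least one candidate is always evaluated, so a legal action is
    returned even if the budget is already exhausted.
    """
    deadline = time.monotonic() + budget
    best, best_value = None, float("-inf")
    for candidate in candidates:
        if best is not None and time.monotonic() >= deadline:
            break
        value = evaluate(candidate)
        if value > best_value:
            best, best_value = candidate, value
    return best
```

The deadline is only checked between evaluations, so a single slow call to `evaluate` can still overrun the limit. Keeping each evaluation cheap matters as much as the check itself.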

Observations: Agents can only observe an opponent's configuration (position and direction) if they or their teammate is within 5 squares (Manhattan distance). In addition, an agent always gets a noisy distance reading for each agent on the board, which can be used to approximately locate unobserved opponents.
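
The visibility rule can be written as a small check. This is a sketch with hypothetical helper names, and it assumes that "within 5 squares" includes a distance of exactly 5.

```python
SIGHT_RANGE = 5  # Manhattan distance, from the rule above


def manhattan(a, b):
    """Manhattan distance between two (x, y) positions."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def can_observe(team_positions, opponent_position, sight_range=SIGHT_RANGE):
    """True if any teammate is close enough to see the opponent exactly."""
    return any(manhattan(pos, opponent_position) <= sight_range
               for pos in team_positions)
```

For example, with teammates at (1, 1) and (10, 10), an opponent at (4, 3) is 5 squares from the first teammate and would be visible to the whole team under this reading of the rule.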

By default, you can run a game with the BaselineAgents that are provided:

    python capture.py

A wealth of options is available to you:

    python capture.py --help

There are six slots for agents, where agents 0, 2 and 4 are always on the red team and 1, 3 and 5 on the blue team. Agents are created by agent factories (one for Red, one for Blue). See the section on designing agents for a description of the agents invoked above. The only agents available now are the BaselineAgents. They are chosen by default, but as an example of how to choose teams:

    python capture.py -r BaselineAgents -b BaselineAgents

which specifies that the red team (-r) and the blue team (-b) are both BaselineAgents.

To control an agent with the keyboard, pass the appropriate option to the red team:

    python capture.py --redOpts first=keys

The arrow keys control your character, which will change from ghost to Pac-Man when crossing the center line.

Baseline Agents: To kickstart your agent design, we have provided you with two baseline agents. They are both quite bad. The OffensiveReflexAgent moves toward the closest food on the opposing side. The DefensiveReflexAgent wanders around on its own side and tries to chase down invaders it happens to see.

Directory Structure: To create an agent, create a subdirectory in the teams directory with the same name as your agent, and put the code for your agent in it. Then properly fill out config.py with your team name, authors, agent factory class, and other options, and place it in the directory along with the rest of your files. For your reference, we have provided a sample config.py configured for the BaselineAgent. The BaselineAgent directory itself is inside the teams directory. Make sure to pick a unique team name!

Interface: The GameState in capture.py should look familiar, but contains new methods like getRedFood, which gets a grid of food on the red side (note that the grid is the size of the board, but is only true for cells on the red side with food). Also, note that you can list a team's indices with getRedTeamIndices, or test membership with isOnRedTeam.
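
As a rough illustration of working with a board-sized food grid, the sketch below uses a plain nested list indexed as `food[x][y]` in place of the contest's Grid type. The helper names are hypothetical, and Manhattan distance is used only to keep the example self-contained.

```python
def food_positions(food):
    """List every (x, y) cell whose entry in the grid is True."""
    return [(x, y)
            for x, column in enumerate(food)
            for y, has_food in enumerate(column)
            if has_food]


def manhattan(a, b):
    """Manhattan distance between two (x, y) positions."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def closest_food(my_position, food, distance=manhattan):
    """Return the nearest food cell, or None if the grid holds no food."""
    targets = food_positions(food)
    if not targets:
        return None
    return min(targets, key=lambda pos: distance(my_position, pos))


# A 3-wide, 2-tall board: food at (0, 1) and (2, 0), nothing elsewhere.
example_food = [[False, True], [False, False], [True, False]]
```

Passing a different `distance` function (for instance, one backed by maze distances) changes which dot counts as closest without touching the rest of the code.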

Finally, you can access the list of noisy distance observations via getAgentDistances. These distances are within 6 of the truth, and the noise is chosen uniformly at random from the range [-6, 6] (e.g., if the true distance is 6, then each of {0, 1, ..., 12} is chosen with probability 1/13). You can get the likelihood of a noisy reading using getDistanceProb.
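
The noise model above lends itself to a simple belief update over possible opponent positions. The sketch below is an assumption-laden illustration: `distance_prob` is a hypothetical stand-in written from the description above, not the contest's getDistanceProb, and it assumes the true distance being perturbed is the Manhattan distance.

```python
NOISE_RANGE = 6
NUM_NOISE_VALUES = 2 * NOISE_RANGE + 1  # the 13 integers in [-6, 6]


def distance_prob(true_distance, noisy_distance):
    """P(noisy reading | true distance) under uniform noise in [-6, 6]."""
    if abs(noisy_distance - true_distance) <= NOISE_RANGE:
        return 1.0 / NUM_NOISE_VALUES
    return 0.0


def update_belief(belief, my_position, noisy_distance):
    """Reweight a {position: probability} belief by one noisy reading.

    Assumes the reading perturbs the Manhattan distance to my_position.
    If the reading rules out every position, the prior is returned as is.
    """
    posterior = {}
    for pos, prior in belief.items():
        true_d = abs(pos[0] - my_position[0]) + abs(pos[1] - my_position[1])
        posterior[pos] = prior * distance_prob(true_d, noisy_distance)
    total = sum(posterior.values())
    if total == 0:
        return dict(belief)
    return {pos: p / total for pos, p in posterior.items()}
```

A single reading only narrows the opponent down to a band of cells. Repeating the update over several turns, together with a model of how the opponent moves, is what makes the estimate useful.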

Distance Calculation: To facilitate agent development, there is code in distanceCalculator.py to supply shortest path maze distances.
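
To show what a maze distance is, and how it differs from Manhattan distance, here is a minimal breadth-first search sketch. It is not the code in distanceCalculator.py: the function name and the `walls[x][y]` layout format are assumptions made for the example, and it computes a single pair rather than all pairs.

```python
from collections import deque


def maze_distance(walls, start, goal):
    """Fewest steps from start to goal, or None if goal is unreachable.

    walls[x][y] is True where the cell is a wall (hypothetical format).
    """
    width, height = len(walls), len(walls[0])
    frontier = deque([(start, 0)])
    visited = {start}
    while frontier:
        (x, y), dist = frontier.popleft()
        if (x, y) == goal:
            return dist
        for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nx, ny = x + dx, y + dy
            if (0 <= nx < width and 0 <= ny < height
                    and not walls[nx][ny] and (nx, ny) not in visited):
                visited.add((nx, ny))
                frontier.append(((nx, ny), dist + 1))
    return None


# 3x3 board with walls at (1, 0) and (1, 1); the only gap is at (1, 2).
example_walls = [[False, False, False],
                 [True, True, False],
                 [False, False, False]]
```

On this board the cells (0, 0) and (2, 0) are 2 apart by Manhattan distance but 6 steps apart through the maze, which is why maze distances usually give better estimates for chasing or fleeing.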

To get started designing your own agent, we recommend subclassing the CaptureAgent class. This provides access to several convenience methods. Some useful methods are:

    def getFood(self, gameState):
        """
        Returns the food you're meant to eat. This is in the form
        of a matrix where m[x][y]=True if there is food you can
        eat (based on your team) in that square.
        """

    def getFoodYouAreDefending(self, gameState):
        """
        Returns the food you're meant to protect (i.e., that your
        opponent is supposed to eat). This is in the form of a
        matrix where m[x][y]=True if there is food at (x,y) that
        your opponent can eat.
        """

    def getOpponents(self, gameState):
        """
        Returns agent indices of your opponents. This is the list
        of the numbers of the agents (e.g., for a red agent this
        might be [1, 3, 5]).
        """

    def getTeam(self, gameState):
        """
        Returns agent indices of your team. This is the list of
        the numbers of the agents (e.g., red might be [0, 2, 4]).
        """

    def getScore(self, gameState):
        """
        Returns how much you are beating the other team by in the
        form of a number that is the difference between your score
        and the opponent's score. This number is negative if you're
        losing.
        """

    def getMazeDistance(self, pos1, pos2):
        """
        Returns the distance between two points. These are calculated
        using the provided distancer object.

        If distancer.getMazeDistances() has been called, then maze
        distances are available. Otherwise, this just returns
        Manhattan distance.
        """

    def getPreviousObservation(self):
        """
        Returns the GameState object corresponding to the last
        state this agent saw (the observed state of the game last
        time this agent moved - this may not include all of your
        opponent's agent locations exactly).
        """

    def getCurrentObservation(self):
        """
        Returns the GameState object corresponding to this agent's
        current observation (the observed state of the game - this
        may not include all of your opponent's agent locations
        exactly).
        """

Restrictions: You are free to design any agent you want. However, you will need to respect the provided APIs. Agents which compute during the opponent's turn will be disqualified. In fact, we do not recommend any sort of multi-threading.

Have fun! Please bring our attention to any problems you discover.